by Gary Mintchell | Jul 2, 2020 | Enterprise IT

The news in brief: New HPE GreenLake cloud services deliver an agile, lower cost, and consistent cloud experience everywhere.

We’re living in an as-a-service and edge-to-cloud world (to paraphrase the Material Girl). When Antonio Neri assumed leadership of the storied (or at least part of the storied) Hewlett Packard, he grasped both that reality and that HPE had most of the tools to get there. A couple of acquisitions, a bolstered executive leadership team, and now the unveiling. There remains more work on the financial end for him, but I think HPE is positioned for growth in this arena.

Last year at Discover, HPE pushed the GreenLake idea on us. This year, its capabilities and possibilities are greatly expanded. And for my industrial / production readers–this applies as much to you as to Enterprise IT. It’s getting blurry at the Edge: apps like MES are moving to the cloud (actually, probably all have moved there), and the roles of Enterprise IT and Manufacturing IT are also blurring at the edges.

It’s a new world–and I don’t mean just post-Covid.

Following is from the release:

Hewlett Packard Enterprise today announced significant advancements to the company’s edge-to-cloud platform-as-a-service strategy, through next-generation cloud services and an accelerated delivery experience for HPE GreenLake. The new HPE GreenLake cloud services, which span container management, machine learning operations, VMs, storage, compute, data protection, and networking, help customers transform and modernize their applications and data – the majority of which live on premises, in colocation facilities, and increasingly at the edge.

“Now more than ever, given current market conditions, organizations have an urgent need to connect and leverage all of their applications and data in order to transform their businesses, support their employees, and serve their customers,” said Antonio Neri, President and CEO, Hewlett Packard Enterprise. “As we enter the next phase of the cloud market, customers require an approach that enables them to innovate and modernize all of their applications and workloads, including those at the edge and on premises. By delivering a consistent cloud experience everywhere through HPE GreenLake cloud services, and software designed to accelerate transformation, HPE is uniquely positioned to help customers harness the full power of their information, wherever it resides.”

Today, organizations are at a crossroads in their digital transformation efforts. According to IDC, despite the growth and adoption of public clouds, 70 percent of applications remain outside of the public cloud. Due to several factors, including application entanglement, data gravity, security and compliance, and unpredictable costs, organizations have struggled to move the majority of the applications that run their businesses to public clouds. Forced to support two operating models, organizations face additional costs, complexity and inefficiency, limited agility and innovation, and the inability to capitalize on information everywhere.

HPE delivers a unique approach to solving this dilemma by providing HPE GreenLake cloud services to customers in the environment of their choice – from edge to cloud – with a consistent operating model and with visibility and governance across all enterprise applications and data.

HPE GreenLake cloud services also provide customers with a superior economic model. Unlike public cloud vendors, which charge customers to get data back on premises, HPE charges no data egress fees. HPE GreenLake’s flexible as-a-service model and robust cost and compliance analytics tools allow customers to preserve cash flow, control spend, and prioritize investments that are aligned to business priorities.

“HPE GreenLake gives us 100% uptime, and the predictable pricing model is already helping us cut costs,” said Ed Hildreth, Manager of IT Distributed Systems, Mohawk Valley Health System. “Thanks to the cloud-like experience, when we needed to quickly activate additional features and resources in response to the COVID-19 pandemic, we were able to easily roll this out with no time delay. We are extremely pleased with HPE GreenLake and plan to leverage this model once again for new hospitals within our health system.”

Introducing New HPE GreenLake Cloud Services for Distributed Environments

HPE now offers cloud services for containers, machine learning operations, virtual machines, storage, compute, data protection and networking. All cloud services are accessible via a self-service point-and-click catalogue on HPE GreenLake Central, a platform where customers can learn about, price, and request a trial on each cloud service; spin up instances and clusters in a few clicks; and manage their multi-cloud estate from one place. They can all be deployed and run in the customers’ environment.

Based on pre-integrated building blocks, the new HPE GreenLake cloud services are now available in small, medium, and large configurations, delivered to customers from order to run in as few as 14 days. Partners and customers benefit from pre-configured reference architectures and pricing to speed time to consuming cloud services.

HPE GreenLake is one of the fastest-growing businesses in HPE, with over 4 billion USD in total contract value.

- Cloud services for Containers – These new HPE GreenLake cloud services, powered by HPE Ezmeral Container Platform, provide the flexibility to run containerized applications in data centers, colocation facilities, multiple public clouds, and at the edge.

- Cloud services for Machine Learning Operations – Through HPE GreenLake, customers can subscribe to a workload-specific solution built on the HPE Ezmeral Container Platform and HPE Ezmeral ML Ops for the entire ML lifecycle.

- Cloud services for Virtual Machines, Storage, and Compute – For customers who want a private cloud experience, HPE is launching HPE GreenLake cloud services for virtual machines, storage and compute. With provisioning of instances in five clicks, these easy-to-deploy services also provide visibility into usage and spend, and active capacity planning with powerful consumption analytics in the HPE GreenLake Central management platform.

- Cloud services for Data Protection – For customers looking to modernize data protection, HPE is making data backup and recovery effortless and automated for every SLA – from rapid recovery to long-term retention. These new cloud services through HPE GreenLake include secure and efficient on-premises backup and an enterprise cloud backup service, HPE Cloud Volumes Backup, which enables backup and recovery to/from the cloud without egress costs or lock-in, and with the agility to activate data for recovery, test/dev, and analytics.

- Cloud services for the Intelligent Edge – Today, more than ever, customers are looking to reduce CapEx to simplify their budget process and better predict and manage network operational costs. Aruba’s new Managed Connectivity Services, now available as cloud services through HPE GreenLake, provide the industry’s first complete Network as a Service offering, and bring cloud agility to the edge with the recently introduced Aruba ESP (Edge Services Platform).

by Gary Mintchell | Jun 26, 2020 | Events, Manufacturing IT, News

Antonio Neri, a scant two years into the position of President and CEO of Hewlett Packard Enterprise (HPE), laid out a vision and roadmap last year to make the company “the global edge-to-cloud platform as-a-service company” which develops and delivers “enterprise technology solutions and services that help organizations accelerate outcomes by unlocking value from all of their data, everywhere.”

This year he unveiled new executive leadership–one heading GreenLake, the as-a-Service portion of the vision, and the other heading Ezmeral, the newly defined software portfolio and direction–and showed how far the vision has come in a year.

I am impressed with the speed and consistency of this pivot.

HPE Discover, the annual conference usually held in Las Vegas, went totally online this year. This was my sixth or seventh of these within four weeks, and by far the best. Kudos to the communications team for pulling this all together. I attended as an Influencer, and the global leader assembled an outstanding group (except for me) and a good program for us to meet as much of the leadership as possible.

I will write in depth specifically about GreenLake, Ezmeral, sustainability, and helping restart work following COVID-19, and also about what is perhaps most important for you, my industrial audience: Aruba Edge Orchestrator.

Why do I take all the time and effort to attend these enterprise and IT events?

Let me share one example. I sat in two sessions specifically talking industrial applications.

In the first, an engineering leader from Stanley Black & Decker spoke of his IoT project using HPE’s Edgeline compute platform at almost every level of the manufacturing stack. Edgeline is an industrialized version of HPE’s high performance compute platform. I have seen examples in previous Discover events from off-shore oil platforms and a refinery in Texas. This is one of the points where the reality of “IT/OT Convergence” hits home.

The other session featured HPE Fellow and VP of HPE Labs Dr. Tom Bradicich detailing the vision of data–from analog data (which he has been discussing with me since his days at NI several years ago) to the edge to the cloud.

You can visit HPE.com and register for the event for a while, yet. Click sessions and search for keywords such as IoT, edge, and so forth to watch the on-demand videos. Yet another benefit of the “virtual experience.”

[You can get these posts delivered to you via email by clicking the appropriate link and leaving your address. I prefer information pushed to me either by email or RSS feed, and I know many who do. I don’t sell or use your address. Addresses remain buried somewhere in my WordPress CMS.]

by Gary Mintchell | Aug 18, 2018 | Asset Performance Management, Operations Management, Software

Who buys enterprise software applications, how and why? I ran across this article by a contact of mine, Gabriel Gheorghiu, founder and principal analyst at Questions Consulting, with a background in business management and 15 years of experience in enterprise software. I thought it would be most useful. I’m not an ERP analyst, but I have some background and training on the financial side of things. I think this analysis fits with other large-scale software acquisition projects, though, including MES/MOM, analytics, asset performance, and the like.

This will summarize some interesting points. I highly recommend reading the whole thing.

Before we begin, my brief take on enterprise software applications. How many of you have been involved with an SAP acquisition and rollout? How many happy people were there? Same with Oracle or any other ERP, CRM, MES, APM, or similar application. Why did using Microsoft Excel seem to go better?

Well, the big applications all force you to change all your business processes to fit their template. You build Excel to fit what you’re doing. It’s just not powerful enough to do everything, right?

Gheorghiu conducted interviews with 225 companies who were all looking for enterprise resource planning (ERP). The goal of this survey was simple – listen and learn from what these companies had to say about their individual decision-making strategies. We all agree that this is not a simple task. But we also agree that selecting the best ERP software is a critical factor for business success.

Here is why the research phase of this process is considered to be so vital:

- It has the greatest impact on all the subsequent phases and consequently, your final decision.

- Research begins at home – in other words, the first step is to determine your company’s specific and unique needs.

- Once your company has thought through and determined its software requirements, then and only then does the process of evaluating vendors and their offerings begin. This can be a very challenging step because many companies are not equipped with the time, knowledge, or tools to perform it.

Buyer Profiles: Who’s Looking for ERP and Why?

One problem for analysis is that many are not doing business in just one industry. The breakdown of companies in our business sample, by industry, was as follows: manufacturing (47%), distribution (18%), services (12%), construction (4%), retail (3%), utilities (3%), government (3%), healthcare (3%), and other (10%). However, to complicate matters a little, 20% of manufacturers also manage distribution and some distributors include light manufacturing in their operations, like assembly.

“Companies looking to invest in business software may very well be addressing this additional challenge – looking for a comprehensive package that integrates all aspects of a business. ERP software systems are powerful and comprehensive but are not necessarily known for their agility and ability to accommodate many disparate functions.”

Gheorghiu identifies consumerization as a strong influence; it shifts the focus from organization-oriented offerings to end-user-focused products. “This was a highly significant turning point in the IT marketplace. By developing new technologies and models that originate in the consumer space rather than in the enterprise sector, software producers opened up the market to a flood of small and medium-sized businesses looking for more cost effective, and less complicated solutions to run their businesses.”

The consumerization of software (as noted above) has precipitated the move by many companies away from enterprise IT towards more streamlined and user-friendly consumer-oriented technology. This change is equally relevant for ERP software, and manufacturing companies have participated in this very significant development, albeit more cautiously and slowly than SMBs.

Most industries follow a “purposeful implementation” strategy, managing software adoption as a series of “sprints in a well-planned program” rather than insisting on the “all or nothing” approach.

For example, a small company looking to invest in software might decide to begin with an accounting system which can be used alongside point solutions and spreadsheets. As companies grow and their transactions become more complex, they may find that they have also outgrown their initial software selections.

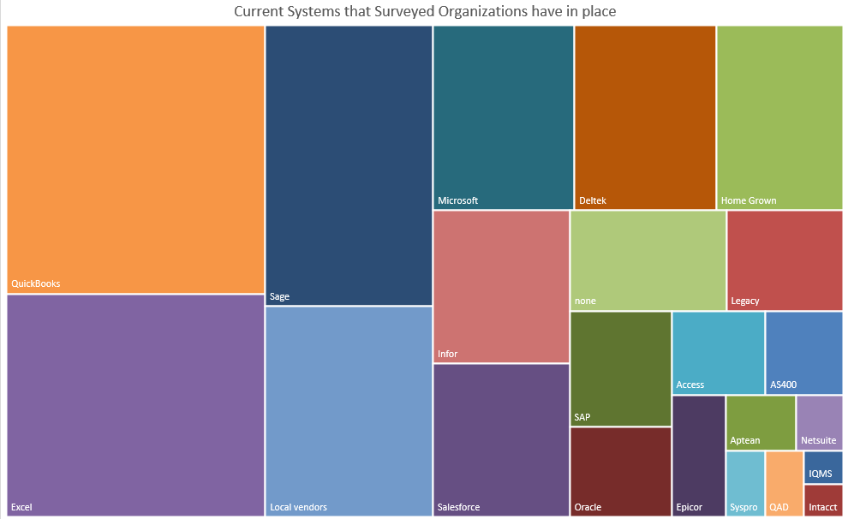

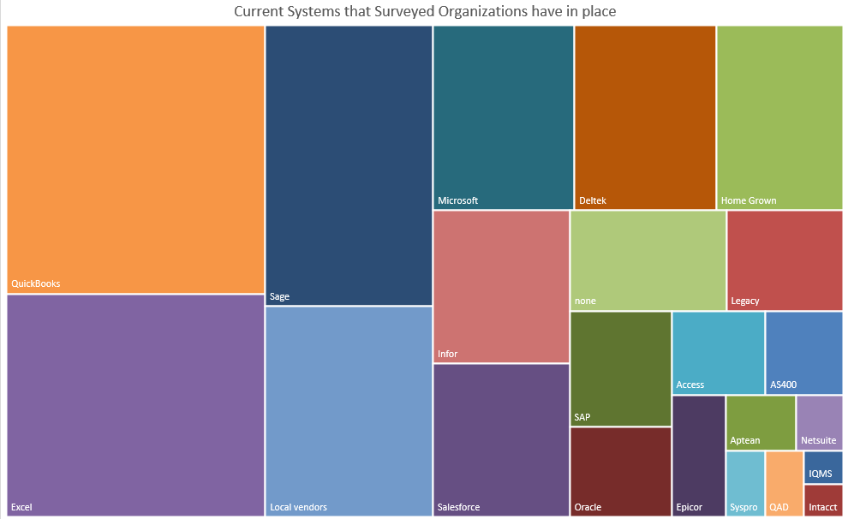

The chart below provides a visual analysis of the mix of software that is currently utilized by our business sample:

Some relevant comments we extracted from our survey included:

- The CEO of a small services company mentioned that he was “tired of the hodgepodge of systems”

- A manufacturer considered their current arrangement to be “very siloed.” Reconciling the inventory balance is a “constant battle.”

Buyer Behavior: How are Companies Approaching ERP Selection?

The selection process is most successful when companies adhere to some basic selection rules: involve as many direct stakeholders as possible and keep business priorities and strategies firmly in mind when making the final decision.

Feature Functions

A software change can trigger a vast administrative upheaval within the company. It is important to carefully analyze the business case for the change and whether it supports the level of disruption as well as the implementation time and spending that will be required. Even if the change may be entirely justified, a well thought out analysis is well worth the time and effort.

The Vendors in the Spotlight

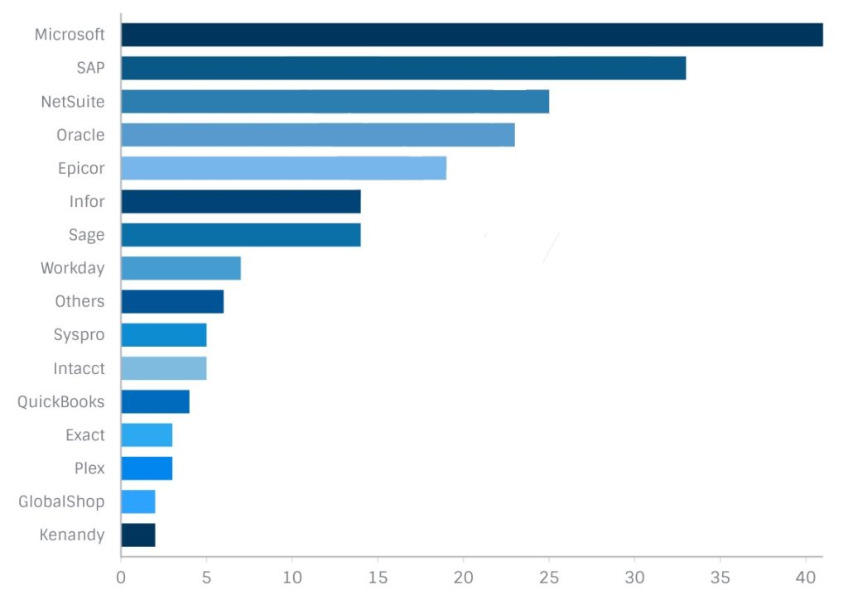

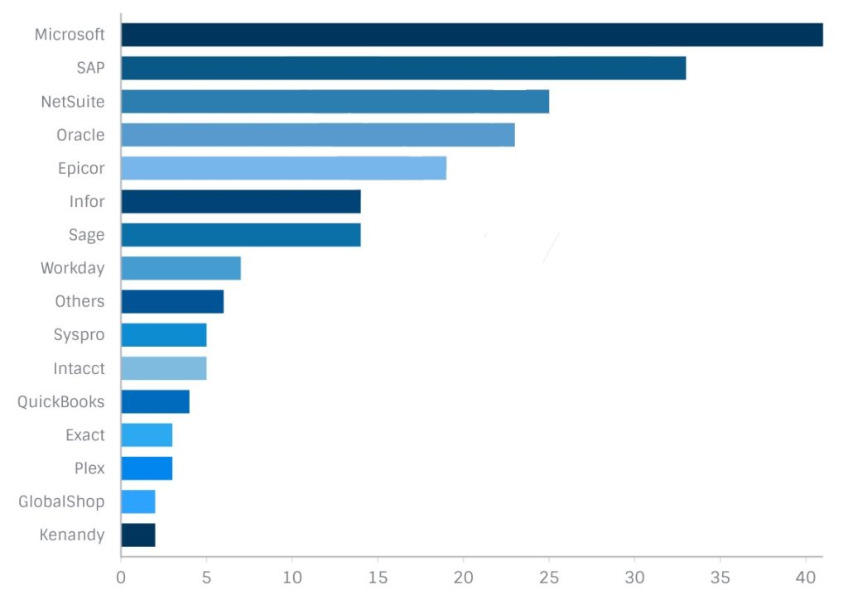

According to our survey results, the chart below identifies the vendors under consideration by the companies surveyed. A majority of companies (53%) were not, for the moment, looking at specific vendors. However, 47% of respondents had narrowed their search to specific vendors.

Who’s Involved in this Decision Selection Process?

Our sample results indicate that the people in charge of the selection process are distributed as follows: employees in the finance and accounting departments (23%) and IT department employees (23%). The other important categories were independent consultants helping companies with the selection process (17%), operations managers (17%) and presidents or CEOs (12%). It is worthwhile mentioning that project managers and business analysts only made up 5% of the total.

By far, the most effective method of choosing software is to employ a collaborative system whereby the actual stakeholders of that system (the end-users) have a direct voice in the decision outcome. As the front-line users of the system, their insight and knowledge are very valuable. Their input, along with all the other stakeholders’ input, will produce the best possible outcome of this process.

An ERP system is a major business investment and is best handled with the appropriate amount of time and diligence given to the process.

The advent of cloud computing has indeed radically changed the landscape for deployment of business software. According to a recent press release by Gartner, “by 2020, a Corporate ‘No-Cloud’ policy will be as rare as a ‘No-Internet’ policy is today.” In other words, cloud deployment will become the default by 2020.

Our survey results, in fact, support Gartner’s analysis. Ninety-five percent of companies responded that they were open to a cloud deployment model, while just over 50% were willing to also consider on premises ERP. Of this latter group of respondents, 65% of them were manufacturers and distributors. This makes sense of course, given that these industries made significant investments in hardware and IT personnel and may not be as ready or as willing to move to the cloud model.

As for the preference for cloud computing (as demonstrated by our responses), we argue that it reflects the very strong tendency in the market to opt for simpler, more streamlined and less expensive computing solutions. As more information and assurances of security and stability by cloud providers enter the marketplace, more and more businesses will be convinced that the many benefits of the cloud outweigh some of their remaining concerns. Gartner’s prediction that cloud will increasingly be the default option for software deployment looks to be right on course.

Conclusion

An important consideration for companies embarking on an ERP software selection process – the average lifespan of an ERP system is approximately 5 to 10 years. If we consider important factors like the investment of capital, time, and loss of productivity that the selection and replacement of an ERP system requires, perhaps all companies would be more willing to invest the necessary effort in this process.

by Gary Mintchell | May 19, 2017 | Automation, Internet of Things, Manufacturing IT, Operations Management, Software

Software platforms, Internet of Things, Digital Transformation and many more manufacturing technologies brought 225,000 people to Hannover last April. I think I got the last available hotel room in Hannover as I prepared for an intense three days of meetings. I’ve written a couple of posts already. But there is much more. My trip to Dell EMC World out of the way, I’m back to finishing some Hannover thoughts.

Check out these posts on IoT Platform Architecture, Augmented Reality, and a review of IoT platforms.

ABB and IBM partner for industrial artificial intelligence

ABB and IBM announced a strategic collaboration that brings together ABB’s ABB Ability with IBM Watson.

Customers will benefit from ABB’s deep domain knowledge and extensive portfolio of digital solutions combined with IBM’s expertise in artificial intelligence and machine learning as well as different industry verticals. The first two joint industry solutions powered by ABB Ability and Watson will bring real-time cognitive insights to the factory floor and smart grids.

“This powerful combination marks truly the next level of industrial technology, moving beyond current connected systems that simply gather data, to industrial operations and machines that use data to sense, analyze, optimize and take actions that drive greater uptime, speed and yield for industrial customers,” said ABB CEO Ulrich Spiesshofer. “With an installed base of 70 million connected devices, 70,000 digital control systems and 6,000 enterprise software solutions, ABB is a trusted leader in the industrial space, and has a four decade long history of creating digital solutions for customers. IBM is a leader in artificial intelligence and cognitive computing. Together, IBM and ABB will create powerful solutions for customers to benefit from the Fourth Industrial Revolution.”

Another quick note about ABB. It has been a leader in high voltage direct current (HVDC) technology. At Hannover it announced the latest development in HVDC Light, making it possible to reliably transmit large amounts of electricity over ever greater distances, economically and efficiently. The next level of ABB’s HVDC Light will enable more than doubling the power capacity to 3,000 megawatts (MW).

“We pioneered HVDC technology in the 1950’s as a game changer, and the birth of HVDC Light in 1997 was one of the most significant milestones in our innovation journey,” said Claudio Facchin, President, ABB Power Grids. “As we mark 20 years of this breakthrough, we are ready to write the next chapter of this technology, with significant enhancements that will help transmit power further with minimum losses and bring major benefits to our customers. HVDC is a cornerstone of our Next Level strategy, reinforcing our position as a partner of choice in enabling a stronger, smarter and greener grid.”

GE Digital

I had several discussions with GE Digital and GE Automation & Controls. Here is an announcement from GE.

GE Digital announced a major release of its Plant Applications Manufacturing Execution System (MES) solution for hybrid manufacturing industries, designed to manage highly automated production processes. This new version features a new user interface, using GE’s advanced UX design, to better enable operations staff to analyze equipment effectiveness and identify root causes of downtime. The first phase of the Plant Applications user interface enhancement makes it easier for plant personnel to utilize MES systems in their day to day work.

Plant Applications holistically automates and integrates data collection from assets on the plant floor used to manage production execution and performance optimization in hybrid manufacturers in industries such as Food & Beverage, Consumer Packaged Goods and Chemical.

GE Automation & Controls, for its part, announced its Control Server and Control System Health App. These innovations are a part of GE’s Industrial Internet Control System (IICS). IICS is an Industrial Internet of Things (IIoT) solution that reliably, safely and securely connects thousands of machines to the power of the cloud and brings computing to the edge.

Utilizing GE’s Field Agent platform, Control Server also enables intensive optimizing apps like Model-based Optimizing Control (MBOC) to inject performance improvements that deliver greater profitability. In addition, the operating and maintenance costs are reduced through consolidation of PC functions provided by virtualization technology on a server-grade platform. With built-in security features, this innovation reduces the cyber-security attack surface and improves compliance with industry regulations.

As part of the industrial app economy, GE also launched the Control System Health App which allows customers to monitor the status of their control hardware from any location with internet access. The app collects real-time data in a time-series database and uses the power of analytics to recommend corrective actions based on faults.

“We are excited to unveil the next round of powerful analytics tools as part of our IICS system,” said Rob McKeel, President and CEO of GE’s Automation and Controls business. “These two innovations will not only help our customers continue to optimize business and asset performance, but now, with the app, they’ll be able to check in on their system in the palm of their hands from anywhere in the world.”

Honeywell Introduces IIoT SDK Utilizing OPC UA

Honeywell Process Solutions announced a software toolkit that simplifies the interconnection of industrial software systems, enabling them to communicate with each other regardless of platform, operating system or size. The Matrikon FLEX OPC Unified Architecture (OPC UA) Software Development Kit (SDK) is ideal for applications where minimal memory and processing resources are common.

“Honeywell Connected Plant is our holistic approach to anticipating and meeting the needs of customers by leveraging the power of the IIoT,” said Shree Dandekar, vice president and general manager, Honeywell Connected Plant. “Within this environment, OPC UA plays a key role in enabling outcome-based business solutions. Our introduction of Matrikon FLEX underscores the importance of this technology.”

Tom Burke, president and executive director, OPC Foundation, commented, “In order to quickly and efficiently implement OPC UA, suppliers need a toolkit to minimize development time and effort, and deliver secure and reliable products. Honeywell’s new SDK is ideal for companies getting started with OPC UA to take advantage of the growth of the IIoT. It provides a way to launch OPC UA-enabled products faster and with fewer changes.”

Parker Hannifin Unveils Voice of the Machine

Parker Hannifin Corp. unveiled the Voice of the Machine IoT platform, an open, interoperable and scalable ecosystem of connected products and services.

“From online platforms that enable users to engage with our broad portfolio of products, systems and engineering talent; to global monitoring and asset integrity management services that keep critical systems productive, we are creating better outcomes for our customers,” said Bob Bond, Vice President – eBusiness, IoT and Services. “Our Voice of the Machine offering operates at the sweet spot of our core competency at the component and system level. Parker is creating discrete insights across our broad range of motion and control products that we can then connect to enterprise IoT solutions.”

Parker is using a center-led approach and has adopted a common set of IoT standards and best practices for use across all its operating groups and technologies. Every connected product uses the same repository of digital services with an exchange-based platform architecture, designed by software experts at Exosite. The Exosite IoT architecture makes it easy to deploy a diverse set of connected solutions leveraging that same set of digital services and to integrate.

ODVA Launches Project to Develop Its Next Generation Platform for Device Description

ODVA has announced that it has embarked on a major new technical activity to develop standards and tools for its next generation of digitized descriptions for device data. ODVA has named the activity “Project xDS.” The project will focus on the development of specifications for workflow-driven device description files for device integration and digitized business models.

Project xDS will define the technologies and standards for “xDS” device description files that are based on a common format and syntax to enable workflow-driven device integration. Typical workflows include network and security configuration, network and security diagnostics, device configuration, and device diagnostics.

Another aspect of Project xDS is to further the realization of applications for a digitized industrial world. Digitization will require the virtual representation of physical devices as digital twins, and xDS device description files will be able to provide the device data needed for this virtual representation. The result will help enable services for configuration, command, monitoring, diagnostics, prognostics and simulation via asset management systems, cloud based analytics, and new command-control architectures for industrial control systems.

by Gary Mintchell | Aug 10, 2015 | Automation, Internet of Things, Interoperability, News, Technology

During NI Week last week in Austin, Texas, IBM representatives discussed some news with me about a new engineering software tool the company has released, called Product Line Engineering (PLE), designed to help manufacturers deal with the complexity of building smart, connected devices. Users of Internet of Things (IoT) products worldwide have geographic-specific needs, leading to slight variations in design across different markets. The IBM software is designed to help engineers manage the cost and effort of customizing product designs.

You may think, as I did, about IBM as an enterprise software company specializing in large, complex databases along with the Watson analytic engine. IBM is also home to Rational Software—an engineering tool used by many developers in the embedded software space. I had forgotten about the many engineering tools existing under the IBM umbrella.

They described the reason for the new release. Manufacturers traditionally manage customization needs by grouping similar designs into product lines. Products within a specific line may have up to 85% of their design in common, with the rest being variable, depending on market requirements and consumer demand and expectation. For example, a car might have a common body and suspension system, while consumers have the option of choosing interior, engine and transmission.

Product Line Engineering from IBM helps engineers specify what’s common and what’s variable within a product line, reducing data duplication and the potential for design errors. The technology supports critical engineering tasks including software development, model-based design, systems engineering, and test and quality management—helping them design complex IoT products faster, and with fewer defects. Additional highlights include:

- Helps manufacturers manage market-specific requirements: Delivered as a web-based product or managed service, the IBM software can help manufacturers become more competitive across worldwide markets by helping them manage versions of requirements across multiple domains including mechanical, electronics and software;

- Leverages the Open Services for Lifecycle Collaboration (OSLC) specification: this helps define configuration management capabilities that span tools and disciplines, including requirements management, systems engineering, modeling, and test and quality.
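To make the common-versus-variable idea concrete, here is a minimal Python sketch. It is not IBM’s tool or API; the `ProductLine` class, its `configure` method, and the sample car options are all hypothetical names invented for illustration, loosely following the car example above. The point is only that the shared design is stored once and each market variant supplies just its validated choices.

```python
from dataclasses import dataclass


@dataclass
class ProductLine:
    """Hypothetical model: shared design parts plus named variation points."""
    name: str
    common: dict[str, str]                  # design elements every variant shares
    variation_points: dict[str, set[str]]   # option name -> allowed choices

    def configure(self, **choices: str) -> dict[str, str]:
        """Build one variant: the common parts plus validated choices."""
        missing = self.variation_points.keys() - choices.keys()
        if missing:
            raise ValueError(f"missing choices for: {sorted(missing)}")
        for point, choice in choices.items():
            allowed = self.variation_points.get(point)
            if allowed is None:
                raise ValueError(f"unknown variation point: {point}")
            if choice not in allowed:
                raise ValueError(f"{choice!r} is not allowed for {point}")
        # Common parts are defined once and merged into every variant.
        return {**self.common, **choices}


# Illustrative data only: a common body and suspension, variable interior,
# engine and transmission.
sedan_line = ProductLine(
    name="sedan",
    common={"body": "four-door", "suspension": "standard"},
    variation_points={
        "interior": {"cloth", "leather"},
        "engine": {"gasoline", "diesel"},
        "transmission": {"manual", "automatic"},
    },
)

variant_a = sedan_line.configure(
    interior="cloth", engine="diesel", transmission="manual"
)
variant_b = sedan_line.configure(
    interior="leather", engine="gasoline", transmission="automatic"
)
```

Because the common parts live in one place, changing the suspension changes every variant at once, and an invalid or missing choice fails at configuration time rather than turning up later as a design error.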

Organizations including Bosch, Datamato Technologies and Project CRYSTAL are leveraging new IBM PLE capabilities to transform business processes. Project CRYSTAL aims to specify product configurations that include data from multiple engineering disciplines, eliminating the need to search multiple places for the right data, and reducing the risk associated with developing complex products.

Dr. Christian El Salloum, AVL List GmbH Graz Austria, the global project coordinator for the ARTEMIS CRYSTAL project, said, “Project CRYSTAL aims to drive tool interoperability widely across four industry segments for advanced systems engineering. Version handling, configuration management and product line engineering are all extremely important capabilities for development of smart, connected products. Working with IBM and others, we are investigating the OSLC Configuration Management draft specification for addressing interoperability needs associated with mission-critical design across multi-disciplinary teams and partners.”

Rob Ekkel, manager at Philips Healthcare R&D and project leader in the EU Crystal project, noted, “Together with IBM and other partners, we are looking in the Crystal project for innovation of our high tech, safety critical medical systems. Given the pace of the market and the technology, we have to manage multiple concurrent versions and configurations of our engineering work products, not just software, but also specifications, and e.g. simulation, test and field data. Interoperability of software and systems engineering tools is essential for us, and we consider IBM as a valuable partner when it comes to OSLC based integrations of engineering tools. We are interested to explore in Crystal the Product Engineering capabilities that IBM is working on, and to extend our current Crystal experiments with e.g. Safety Risk Management with OSLC based product engineering.”

Nico Maldener, Senior Project Manager, Bosch, added, “Tool-based product line engineering helps Bosch to faster tailor its products to meet the needs of world-wide markets.”

Sachin Londhe, Managing Director, Datamato Technologies, said, “To meet its objective of delivering high-quality products and services, Datamato depends on leading tools. We expect that product line engineering and software development capabilities from IBM will help us provide our clients with a competitive advantage.”